Some things are a numbers game: retail sales, top-flight athletics, fund management. There are standard yardsticks, you can compare the players, and there’s intense competition.

Some things clearly aren’t like that: teaching, poetry, social care, research science, political lobbying maybe. That’s not to say that people don’t try, or that there aren’t measurable factors or reasonable proxies for some of those factors.

So where does digital communication in government sit? Traditionally a marketing communications discipline, it’s been an awkward fit between those from commercial marketing backgrounds, who expect a quantifiable return on investment, and those from information, behavioural psychology or news backgrounds, who don’t, really. Throw in the fact that, done well, it’s a highly innovative field of work with relatively few industry conventions, and you’ve got a real challenge for evaluating success.

Stephen Hale is in characteristically thoughtful and practical mode over on his new work blog, on the subject of how to evaluate how successful his team has been in its stated aim of becoming the most effective digital communication operation in government. Knowing that no measures are perfect but you have to gather some data in order to have an objective evaluation, his team have identified 13 measures to help them monitor their progress against that goal, and he’s blogged about them with impressive openness.

I’ve always struggled to find ways to articulate the goals for the teams I’ve been part of, and universally failed to define suitable KPIs. But Stephen’s post motivated me to try, and I think that’s partly because I’m not entirely comfortable with the conclusions he reached. What follows from me here, therefore, is a mixture of half-formed ideas and rank hypocrisy, as all good blog posts are.

Stephen’s indicators are as follows:

1. Comparison to peers. KPI: Mentions in government blogs

2. Digital hero. KPI: Sentiment of Twitter references for our digital engagement lead

3. Efficiency. KPI: Percentage reduction of cost-per-visit in the 2011 report on cost, quality and usage

4. Types of digital content. KPI: Number of relevant results for “Department of Health” and “blogs” in first page of google.co.uk search

5. Audience engagement. KPI: Volume of referrals to dh.gov.uk

6. Platform. KPI: Invitations to talk at conferences about our web platform

7. Social media engagement. KPI: Volume of retweets/mentions for our main Twitter channel

8. Personal development. KPI: Number of people in the digital communication team with “a broad range of digital communication skills” on their CV.

9. Internal campaign. KPI: Positive answer to the question: “Do you understand the role of the Digital communications team?”

10. Staff engagement. KPI: Referrals to homepage features (corporate messages) on the staff engagement channel

11. Solving policy problems. KPI: Number of completed case studies showing how digital communication has solved policy problems

12. News and press. KPI: Number of examples of press officers including digital communication in media handling notes

13. Strategic campaigns. KPI: Sentiment of comments about our priority campaign on target websites

Clearly, there’s more thinking behind this than just these KPIs, and I don’t want to unfairly characterise this pretty decent list as a straw man or pick on the individual items. So I tried to frame this instead by asking a more basic question: what actually makes a government digital team effective? And for me, I think there are three key aspects:

Impact

- how wide is the reach of the team’s work?

- does it accurately engage the intended audiences?

- what kind of change or action results from its work?

Productivity

- how efficiently are goals achieved, in terms of staff time and budget?

- how skilled and motivated is the team?

- how successful is the team at maintaining quality and its compliance obligations?

Satisfaction

- how satisfied are the target audiences with the usefulness of the team’s work?

- how satisfied are internal clients with the contribution the team’s work makes to their own?

- what reputation does the team have with external stakeholders and peers?

In terms of coverage, I don’t think there’s a great deal of difference between my list and Stephen’s. But the key challenge with my list is that I’d struggle to define meaningful KPIs for many of those criteria. To me, that’s a reason to find more valid ways of evaluating performance than KPIs, rather than to use the KPIs that are readily measurable.

In fact, I think I’d go as far as to argue that given the kind of innovative knowledge work a government digital team does, probably the majority of its approach to evaluation should be qualitative, getting the participants and customers to reflect on how things went and how they might be improved – and resist the pressure to generate numbers. Partly, I think that’s because those numbers often lack relevance, and are weak proxies at best for the often complex goals and audiences involved. Often, they are beyond the control of the team to influence. But more importantly, even as part of a really well balanced scorecard approach, they distort effort and incentives by providing intermediary goals which aren’t directly aligned with the real purpose of the team. There’s a great discussion of this in DeMarco and Lister’s Peopleware – a real classic in how to manage technology teams.

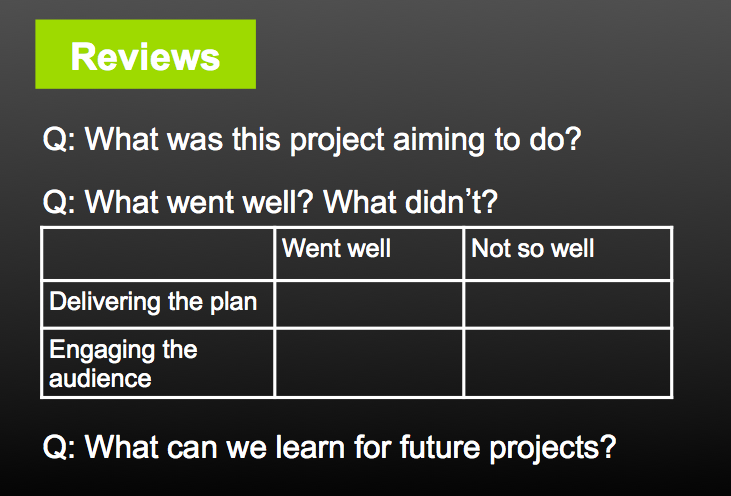

One such qualitative review process I tried (somewhat half-heartedly) to introduce in one team I worked in was the idea of post-project reviews centred around a meeting to discuss three questions, looking not only at the outcomes of the project, but also at how we felt about the process and what we could learn from it:

How do you gather this feedback? Well, collecting emails is one way. A simple, short internal client feedback form sent after big projects and to regular contacts is another. Review meetings focussed on projects are pretty important. And asking for freeform feedback from customers whether via comment forms, ratings, emails or Twitter replies is pretty vital too. By all means monitor the analytics and other quantitative indicators, but use them primarily as the basis for reflection and ideas for improvement. Then make the case aggressively to managers that it’s on the basis of improvement or value added that the team should really be judged.

Oddly, I think there is an exception to this qualitative approach, and that’s in quite a disciplined approach to measuring productivity. The civil service isn’t generally great at performance management (and many corporate organisations aren’t, to be fair). But in the current climate, being able to measure and demonstrate improvement in the efficiency of your team is really important. I’m in the odd minority who believe in the virtue of timesheets, as a way of tracking how clients and bureaucracy use up time, rather than as a way of incentivising excessive hours. If adding a consultation to your CMS takes a day, or publishing a new corporate tweet involves three people and an hour per tweet to draft and upload, it’s important to know that and tackle the underlying technological and process causes.
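To make that concrete, here is a minimal sketch of the kind of tally a timesheet makes possible. Everything in it is an assumption for illustration: the `entries` list, the task and role names, the hours and the `summarise` helper are all made up, not real data or anyone's actual system.

```python
from collections import defaultdict

# Hypothetical timesheet entries: (task, person, hours).
# Task names, roles and hours are illustrative, not real figures.
entries = [
    ("corporate tweet", "drafter", 0.5),
    ("corporate tweet", "approver", 0.25),
    ("corporate tweet", "publisher", 0.25),
    ("consultation page", "editor", 7.5),
]


def summarise(entries):
    """Total the hours and count the distinct people involved per task."""
    hours = defaultdict(float)
    people = defaultdict(set)
    for task, person, spent in entries:
        hours[task] += spent
        people[task].add(person)
    return {
        task: {"hours": hours[task], "people": len(people[task])}
        for task in hours
    }


summary = summarise(entries)

# List the most time-consuming tasks first, as candidates for process fixes.
for task, stats in sorted(
    summary.items(), key=lambda item: item[1]["hours"], reverse=True
):
    print(f"{task}: {stats['hours']:.2f} hours across {stats['people']} people")
```

The point of a tally like this isn't the number itself, but that it shows where the hand-offs and hours pile up, which is where to start asking about the technology and the process.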

But productivity as I think about it is also about a happy, motivated team working at the edge of their capabilities, as part of a positive and supportive network. Keeping an eye on that is really about good day-to-day management rather than numbers.

Three cheers for Stephen and his team for demonstrating the scope of their work and identifying measurable aspects of their performance against it. I’m on uncertain ground here: I’m not so naïve as to think that team performance in some organisations (not necessarily Stephen’s) isn’t often assessed on numbers, and teams without demonstrable KPIs can be vulnerable.

But in designing yardsticks, let’s not underestimate the value of qualitative data and reflection in making valid assessments of success and actually improving the way government does digital.

Comments

Insightful as ever – thanks for this post Steph, lots to think about and echoes many of the discussions we have in our team: management’s desire for big numbers, compared to our preference for measuring effectiveness – even though that is a really slippery-to-grasp concept. The fact that so many of the metrics associated with social media ARE so visible makes people assume you can find out absolutely everything – forgetting that in the traditional world of press monitoring, there was not much beyond knowing the circulation of papers or some mysterious measures for viewing/listening figures. You’ve sparked off a few thoughts (just as Stephen has in his post) – I’ll think on, and post later.

I found the pieceful helpful althought the introduction was a little choppy, not clear you were talking of project managing. The backgrounds of the people really do not matter project management is a generally a process. (not critical criticism).

This is hot topic in Social Media as I can gather from Campaign and established SM community but not sure we have it right here in the UK.

Also I am not sure the majority of evaluation should be qualitave, some people will require more quatative especially MDs at agencies born in the 60s and 70s. They will seek ROI and heap of pie charts to prove it, as there staff create new titles like head of SM in middle management and prof level.

Saying all this in a constructive fashion and will follow the topic some more.

Er, honestly can’t figure out if this is a very clever bot or a person commenting – ?

If it’s a person: just because someone asks for heaps of charts doesn’t mean you need to give them to them. And I don’t think I am talking about project management.

Thanks Julia – I meant to tackle the point that because it’s digital, it’s more easily measurable. There are certainly more metrics available, as you say, but their meaning is dubious. It’s an odd one, because I sometimes find myself using that kind of justification to people and realising my mouth has disengaged from my brain a bit. Evaluating this stuff sensibly is bloody difficult.

You’re right of course. What we were trying to do in our workshop was identify some fairly generic, easy-to-measure things that would give us some indication of how we are doing as a team. But the real value for evaluation comes when you start to evaluate projects or campaigns. It’s more difficult, but it tells you more about your ultimate effectiveness. So for example, it won’t matter at all if we double the volume of referrals to dh.gov.uk if we don’t run an effective campaign to help deliver the transition to a new health and care system – and we’ll need to identify other ways to evaluate that, which are less about easy-to-measure quantitative metrics and more about evaluating how far we have helped solve policy problems.